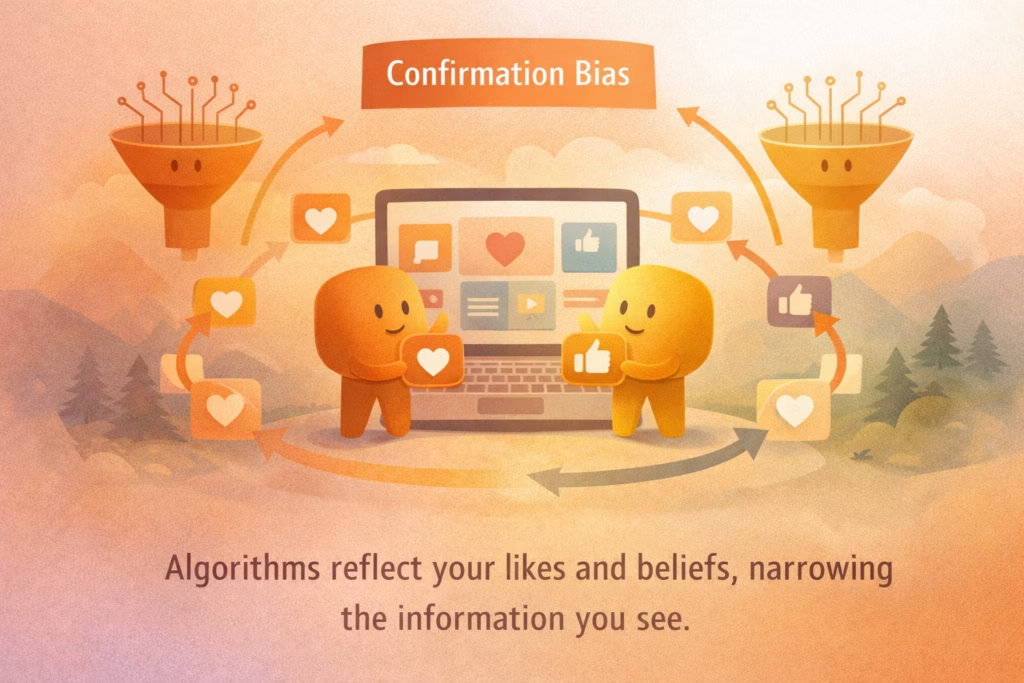

Confirmation bias is the tendency to favor information that matches what you already believe. Technology can reinforce it when algorithms repeatedly show you content that fits your existing beliefs or preferences. These algorithms are step-by-step systems that analyze your behavior, such as clicks, likes, and watch time, to predict what you want to see next. Over time, this can narrow your exposure to different viewpoints, so you see more of what already feels familiar. As we explore this together, you will begin to see how your everyday choices and our shared digital systems shape the information environment we all move through.

Why does this matter for you and for the communities you care about? Much of our daily information flows through digital platforms that influence how we connect, learn, and understand one another. When engagement-based systems prioritize content similar to your past behavior, they amplify existing preferences. This can make certain ideas feel more common or more widely accepted than they may actually be. By recognizing this pattern together, we build shared awareness, strengthen trust in our own thinking, and support a more open and balanced exchange of ideas.

In this guide, you will learn how recommendation systems use engagement signals, how AI training data encodes human assumptions, and how repeated exposure makes ideas feel more true and more widely shared than they may be. As we walk through these ideas side by side, you will also find practical steps you can take to broaden your digital perspective. Together, these insights can help you feel more supported and confident as you engage with technology in your daily life.

How Technology and AI Reinforce Confirmation Bias

Technology operationalizes confirmation bias by turning behavior into measurable data. When you frequently click on articles that support a specific viewpoint, a recommendation system records those interactions. Because engagement signals interest, the system presents more content aligned with that pattern. Over time, your feed becomes increasingly tailored: similar ideas start to look dominant, while alternative perspectives become less visible. As we reflect on this process together, you can see how your actions and the platform’s design interact, shaping not only your experience but also the broader digital community we share.
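To make the click-to-feed step concrete, here is a minimal sketch in Python. It assumes a toy catalog where every article carries exactly one viewpoint tag. It also assumes a recommender that ranks articles only by how often you have clicked that viewpoint. `CATALOG`, `EngagementRecommender`, `record_click`, and `recommend` are hypothetical names for illustration, not any platform's actual API, and real systems combine many more signals.

```python
from collections import Counter

# Hypothetical catalog: each article is tagged with a single viewpoint.
CATALOG = {
    "a1": "view_a", "a2": "view_a", "a3": "view_a",
    "b1": "view_b", "b2": "view_b", "b3": "view_b",
}


class EngagementRecommender:
    """Toy recommender that scores viewpoints by recorded clicks (an assumption)."""

    def __init__(self, catalog):
        self.catalog = catalog
        self.clicks = Counter()  # viewpoint -> number of recorded clicks

    def record_click(self, article_id):
        # Each click is logged as an engagement signal for the article's viewpoint.
        self.clicks[self.catalog[article_id]] += 1

    def recommend(self, k=3):
        # Rank articles by their viewpoint's click count (highest first);
        # ties fall back to alphabetical article id.
        ranked = sorted(self.catalog, key=lambda a: (-self.clicks[self.catalog[a]], a))
        return ranked[:k]


rec = EngagementRecommender(CATALOG)
print(rec.recommend())  # ['a1', 'a2', 'a3'] (no clicks yet, so id order decides)
rec.record_click("b1")
print(rec.recommend())  # ['b1', 'b2', 'b3']
```

In this toy, a single click is enough to reorder the feed. That is an exaggeration, but it shows the direction of the effect: whatever you engage with is what the ranking rewards.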

AI systems are trained on historical data and optimized for goals such as engagement and interaction. If that data reflects existing human preferences or assumptions, those patterns carry over into the system's outputs. Because the system responds automatically, this reinforcement can seem neutral even when it is not. In practice, a feedback loop forms: beliefs influence clicks, clicks influence recommendations, and recommendations strengthen beliefs. When we understand this loop together, you gain clarity about how your engagement and the system interact, helping you approach your feed with awareness, care, and a sense of shared responsibility.
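The belief, click, and recommendation loop can be sketched as a small expected-value simulation. This is an illustrative model under assumed dynamics, not how any particular platform works:

- `belief` is a hypothetical probability that you prefer viewpoint A.
- The next feed simply mirrors the mix of your clicks.
- `rate` is an assumed parameter controlling how strongly what you see pulls your belief.

```python
def simulate_feedback_loop(belief, feed_share=0.5, rounds=10, rate=0.5):
    """Expected-value toy model of the belief -> click -> recommendation loop.

    belief:     assumed probability (strictly between 0 and 1) that the user
                prefers viewpoint A
    feed_share: fraction of the feed currently showing viewpoint A
    rate:       assumed strength with which the feed pulls the belief
    Returns a list of (feed_share, belief) pairs, one per round.
    """
    history = []
    for _ in range(rounds):
        # Beliefs influence clicks: expected clicks on each viewpoint's items.
        clicks_a = feed_share * belief
        clicks_b = (1 - feed_share) * (1 - belief)
        # Clicks influence recommendations: the next feed mirrors the click mix.
        feed_share = clicks_a / (clicks_a + clicks_b)
        # Recommendations strengthen beliefs: belief drifts toward the feed mix.
        belief += rate * (feed_share - belief)
        history.append((feed_share, belief))
    return history


final_share, final_belief = simulate_feedback_loop(belief=0.6)[-1]
print(final_share > 0.9, final_belief > 0.9)  # True True
```

With no initial lean (`belief=0.5`), nothing changes from round to round. A mild lean of 0.6 is enough for the feed share and the belief to climb together, because each step of the loop feeds the next.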

- Recommendation Systems and Engagement Signals

Recommendation systems learn directly from your behavior. Every click, pause, or share acts as feedback that shapes future suggestions. If you regularly watch videos from one perspective, the system increases similar recommendations because engagement is its optimization goal. You can help guide this process by intentionally interacting with diverse content and following varied sources. When you do, you not only expand your own view, but also contribute to a more inclusive and balanced digital community.

- Training Data and Embedded Assumptions

AI models are trained on historical datasets that reflect real-world human behavior. If those datasets include one-sided patterns or assumptions, the AI mirrors them in its outputs. Content that historically generates strong reactions may be prioritized because it meets engagement goals. As you engage with AI-generated recommendations, you can pause and ask whether the output reflects data patterns rather than balanced evaluation. This small moment of reflection supports thoughtful participation and helps strengthen a culture of shared learning.

- Repetition and Perceived Consensus

Repeated exposure increases familiarity, and familiarity can feel like truth. When similar ideas appear consistently in your feed, they seem widely accepted. This effect is stronger when alternative viewpoints rarely appear. Together, we can gently interrupt this cycle by actively searching for different perspectives instead of relying only on automated recommendations. In doing so, you help create a more open, respectful, and connected conversation within your community.
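A short sketch shows how a filtered feed can distort perceived consensus. It assumes a hypothetical pool of ten posts in which only three express viewpoint A. It also assumes a toy ranking, `personalized_feed` (a hypothetical helper), that places posts matching your preference first.

```python
def share(posts, view):
    """Fraction of posts that express the given viewpoint."""
    return sum(1 for p in posts if p == view) / len(posts)


def personalized_feed(pool, preferred, size):
    """Toy ranking (an assumption): posts matching the preference come first."""
    # sorted() is stable, so each group keeps its original order.
    return sorted(pool, key=lambda p: p != preferred)[:size]


pool = ["A"] * 3 + ["B"] * 7  # hypothetical: 30% of all posts express "A"
feed = personalized_feed(pool, "A", 5)
print(share(pool, "A"), share(feed, "A"))  # 0.3 0.6
```

Judged from the feed alone, viewpoint A appears to have twice the support it actually has in the pool. No post was changed; only the selection of which posts were shown.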

See Beyond Algorithmic Echo Chambers

Start by observing your digital habits with curiosity and care. Notice what types of content you repeatedly engage with and how your feed evolves over time. Experiment with following new sources or exploring topics outside your usual interests. As you understand how algorithms respond to your behavior, you gain meaningful influence over your information environment and help foster a healthier, more connected digital community for all of us.
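If you want to put a number on these observations, one option is to log the topics of what you engage with and compute a diversity score. The sketch below uses normalized Shannon entropy, a standard diversity measure chosen here as an assumption; the guide does not prescribe a metric. `topic_diversity` and the topic labels are hypothetical.

```python
import math
from collections import Counter


def topic_diversity(history):
    """Normalized Shannon entropy of the topics in an engagement history.

    Returns 0.0 when every item shares one topic (or the history is empty)
    and 1.0 when all observed topics appear equally often.
    """
    counts = Counter(history)
    if len(counts) <= 1:
        return 0.0
    total = len(history)
    entropy = -sum((c / total) * math.log(c / total) for c in counts.values())
    # Divide by the maximum possible entropy for this many observed topics.
    return entropy / math.log(len(counts))


last_month = ["politics"] * 8 + ["science", "sports"]
this_month = ["politics", "science", "sports"] * 3
print(topic_diversity(last_month) < topic_diversity(this_month))  # True
```

Comparing the score for one month's history with the next can show whether your feed is narrowing or broadening. The score is relative to the topics that actually appear in the history, so it says nothing about topics you never encounter.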